Rafa Spoladore Ψ

Shared posts

LibreOffice and OpenOffice clash over user numbers (OStatic)

Susan Linton at OStatic reports on both sides of a dispute between LibreOffice and Apache OpenOffice project members over LibreOffice's download numbers. Rob Weir of OpenOffice challenged the LibreOffice statistics as "puffery" earlier in the week, claiming higher download rates for OpenOffice. Italo Vignoli of LibreOffice responded to the charge with a list of large organizations that have switched to LibreOffice, suggesting Weir was "scared by their success" — which, in turn, prompted another round of replies from each corner. Inter-project rivalries aside, the debate highlights the persistent difficulty of enumerating open source users. Who said statistics were boring?

Journey towards idiocracy may have begun 2,000 years ago

The U.S. president in 2505 AD

Now, the geneticist Gerald Crabtree of Stanford has published two papers in Trends in Genetics:

Our fragile intellect. Part I

Our fragile intellect. Part II

He argues that the decline isn't starting now; it began 2,000-6,000 years ago.

The Guardian offers a clear summary of the papers:

Is pampered humanity getting steadily less intelligent?

They're purely theoretical papers, but they contain much more than an unjustified guess. The author tries to quantify the number of genes responsible for intelligence and the degree of selection that lifestyles at various points in human history imposed on those genes.

Because the punishment for low intelligence was arguably severe, and mostly lethal, when humans were hunter-gatherers, people were smarter back then. The decline is linked to the appearance of agriculture. Well, in some regions, the agricultural revolution already began in the Neolithic era, perhaps 20,000 years ago. But there are other objections one could raise.

In particular, the choice of agriculture as the main culprit seems a bit arbitrary. Many other achievements have both simplified human lives and encouraged people to become ever less intelligent. After all, agriculture also requires some thinking. In my opinion, nothing like agriculture, the steam engine or computers can match the crippling effect that modern welfare states and political correctness have on the genetic quality of the human population when it comes to intelligence.

The timing is subtle and hard to measure, and there may also be "different types of intelligence" that peaked at different moments. However, I think that the most universal assertion of the papers, namely that there has to be a point ("peak IQ") at which people's intelligence starts to decline, is valid.

I have often thought about the true intelligence of people like Isaac Newton, who was arguably the smartest famous man who ever walked the surface of the globe. I included the word "famous" because it seems very plausible to me that there have been smarter people who just weren't as lucky as Newton, so they didn't make the same impact. But if one focuses on the famous ones, Newton was arguably #1.

It's interesting to guess how quickly Isaac Newton would be able to learn quantum mechanics, general relativity, string theory, and everything else needed to become the #1 physicist in the world of 2012, one who would also discover new things we are unable to find. It seems more likely than not that he would have the potential to become a top physicist. I am slightly less certain that he would be considered #1 (of course, those who judge others may often be wrong), but it's also plausible that he would have trouble with the new physics, much as Albert Einstein had trouble with quantum physics (and there are other examples). Some great personalities may only have been given the potential to lead a particular revolution or two, not "any revolution".

There are many theoretical questions about the actual behavior and evolution of mankind's IQ distribution. And there are also many practical questions: whether it matters, whether something should be done about it and, if the answer is Yes, what should be done. I am agnostic about it. Any policies of this kind that would try to intervene in people's reproductive lives look repulsive to me. On the other hand, if I were assured that mankind would converge to the midpoint between current humans and chimps within 150 years, of course I would prefer some mitigation policies. ;-)

What do you think about those matters?

How Much Did The FBI Snoop On Email Messages To Uncover The Petraeus Situation?

Under the 1986 Electronic Communications Privacy Act, federal authorities need only a subpoena approved by a federal prosecutor, not a judge, to obtain electronic messages that are six months old or older. To get more recent communications, a warrant from a judge is required. This is a higher standard that requires proof of probable cause that a crime is being committed.

But even that isn't entirely clear. Folks like Julian Sanchez have been puzzling through the timeline of events and wondering how a simple investigation into a small number of "rude" (but not illegal) emails then uncovered thousands of questionable emails involving a different general, as alleged in the news that broke last night. It feels like the FBI may have taken a simple report of misconduct (which may have been driven by another love-triangle issue involving an FBI agent who seemed to take the whole thing far more personally than makes sense) and turned it into a massive fishing expedition.

Given how fast new parts of this story keep breaking, I'm sure there are still a number of other dominoes to fall, but hopefully this actually gets people to pay attention to just how easy it is for law enforcement to snoop on people's emails these days based on next to nothing.

The round-robin format has turned the Brasileirão into a Rio-São Paulo affair

2012 marked the tenth edition of the Campeonato Brasileiro played as a round-robin league (pontos corridos). Despite criticism from those nostalgic for the knockout format (mata-mata), the system introduced in 2003 has caught on and has proven beneficial, mainly because it forces clubs to be better organized and to plan better. Still, over the 10 championships held so far, one thing has become clear: the round-robin Brasileirão is a deluxe Rio-São Paulo tournament.

Just look:

Since 2003, we have had 6 different champions: Cruzeiro (2003), Santos (2004), Corinthians (2005 and 2011), São Paulo (2006, 2007 and 2008), Flamengo (2009) and Flu (2010 and 2012). In other words, apart from Cruzeiro, the very first one, clubs from Rio and São Paulo have completely dominated the competition.

But not every Rio or São Paulo club is better than teams from other states, as this year's table itself shows. The problem is that the best of Rio and SP are still better than the best from everywhere else. And that becomes evident when everyone has to play 38 matches, plus a pile of others in the Libertadores, the Copa do Brasil and the Sul-Americana. Without a large squad of at least minimal quality, you don't win the championship. With less than that, you might snag a Libertadores spot or, for the more modest clubs, stay in the top division, but you won't win the title. And that reality becomes more evident with every new season. We are unlikely to see a repeat of what happened with Flamengo in 2009. That was an outlier, and it won't happen again any time soon. The round-robin league is a competition of consistency. Just ask Flu.

So how do we solve the problem of Rio and São Paulo dominance in the championship? By going back to the old format? Of course not! That would be like throwing out the sofa instead of fixing the real problem. Things were no different before the round-robin era. In the 10 editions before 2003, the champions were: Palmeiras (93 and 94), Botafogo (95), Grêmio (96), Vasco (97 and 2000), Corinthians (98 and 99), Atlético-PR (2001) and Santos (2002). In other words, 80% of the titles went to a club from either Rio or São Paulo. You don't have to be a genius to see that the problem is not the competition format.
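The percentages are easy to check. Below is a minimal Python sketch that tallies the champions listed above by state. The club-to-state mapping (RJ = Rio de Janeiro, SP = São Paulo, and so on) is added here for the sketch and is not part of the original post. By the same count, 9 of the 10 round-robin titles (90%) went to Rio or São Paulo.

```python
from __future__ import annotations

from collections import Counter

# Home state of each club (added for this sketch; not stated in the post).
CLUB_STATE = {
    "Palmeiras": "SP", "Corinthians": "SP", "Santos": "SP", "São Paulo": "SP",
    "Botafogo": "RJ", "Vasco": "RJ", "Flamengo": "RJ", "Flu": "RJ",
    "Grêmio": "RS", "Atlético-PR": "PR", "Cruzeiro": "MG",
}

# Champions by year, as listed in the post.
KNOCKOUT_ERA = {
    1993: "Palmeiras", 1994: "Palmeiras", 1995: "Botafogo", 1996: "Grêmio",
    1997: "Vasco", 1998: "Corinthians", 1999: "Corinthians", 2000: "Vasco",
    2001: "Atlético-PR", 2002: "Santos",
}
ROUND_ROBIN_ERA = {
    2003: "Cruzeiro", 2004: "Santos", 2005: "Corinthians", 2006: "São Paulo",
    2007: "São Paulo", 2008: "São Paulo", 2009: "Flamengo", 2010: "Flu",
    2011: "Corinthians", 2012: "Flu",
}


def rio_sp_share(champions: dict[int, str]) -> float:
    """Fraction of titles won by clubs from Rio de Janeiro (RJ) or São Paulo (SP)."""
    by_state = Counter(CLUB_STATE[club] for club in champions.values())
    return (by_state["RJ"] + by_state["SP"]) / len(champions)


for label, era in (("1993-2002", KNOCKOUT_ERA), ("2003-2012", ROUND_ROBIN_ERA)):
    distinct = len(set(era.values()))
    print(f"{label}: {rio_sp_share(era):.0%} of titles to Rio/SP, {distinct} different champions")
```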

It is not impossible for a club from outside the "axis" to break into this clique. But the contenders will have to be more creative since, however hard they try, they will never be able to compete with Rio and São Paulo clubs in, for example, media exposure. And that may be the most valuable asset when sitting down to negotiate. More exposure, more money. More money, more ability to go shopping and bring in the best the player market has to offer. It's simple math.

Medium- to long-term work is the only solution. Clubs need, for example, to develop players and use them in the first team, because that reduces the need to sign players and also helps raise cash if a player is sold. In addition, they have to know how to exploit each club's regional potential, with initiatives that play to the characteristics of their fan bases and so on. Corinthians learned to do this and today has in the Fiel (its supporters) the foundation that sustains everything else. Inter and Grêmio, which will soon have brand-new stadiums (or nearly so, in the Colorado's case), are, the way I see it, a little ahead of Galo and, above all, Cruzeiro, which, however much they like and miss the Mineirão, don't have a stadium to call their own.

If clubs know how to work, any competition format plays in their favor. And although I am a staunch defender of the round-robin league, I see no problem at all in mixing it with knockout rounds. If the gang of bloodsuckers plaguing Brazilian football were willing to loosen their grip just a little, if they at least agreed to cut the insignificant, tedious state championships in half, we would already gain two more months in the calendar. With that time, it would be perfectly feasible to hold a playoff among the top 4 finishers (1st vs 4th; 2nd vs 3rd) over home-and-away legs. We would have 4 semifinal matches and two finals to decide the Brazilian champion. Imagine that! It can be done. The way things are now, it can't. But if they stop to think about what really matters for football, then it can.
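The proposed finish (top 4, 1st vs 4th and 2nd vs 3rd, two legs per tie) adds six matches: four semifinal legs and two final legs. Here is a hypothetical Python sketch of the pairing. The rule that the better-placed club hosts the second leg and the placeholder names for the finalists are assumptions; the post does not specify them.

```python
from __future__ import annotations


def two_legs(lower_seed: str, higher_seed: str) -> list[tuple[str, str]]:
    """A home-and-away tie as (home, away) pairs.

    Assumption: the better-placed club hosts the second leg.
    """
    return [(lower_seed, higher_seed), (higher_seed, lower_seed)]


def playoff_schedule(table: list[str]) -> dict[str, list[tuple[str, str]]]:
    """Build the proposed playoff from the final league table (best club first)."""
    if len(table) < 4:
        raise ValueError("need at least the top 4 clubs")
    first, second, third, fourth = table[:4]
    semifinals = two_legs(fourth, first) + two_legs(third, second)
    # Finalists are only known after the semis, so use hypothetical placeholders.
    final = two_legs("Winner of 2nd vs 3rd", "Winner of 1st vs 4th")
    return {"semifinals": semifinals, "final": final}


schedule = playoff_schedule(["Club A", "Club B", "Club C", "Club D", "Club E"])
for stage, matches in schedule.items():
    for home, away in matches:
        print(f"{stage}: {home} x {away}")
print("extra matches:", sum(len(m) for m in schedule.values()))
```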

Cheers,

Tatu

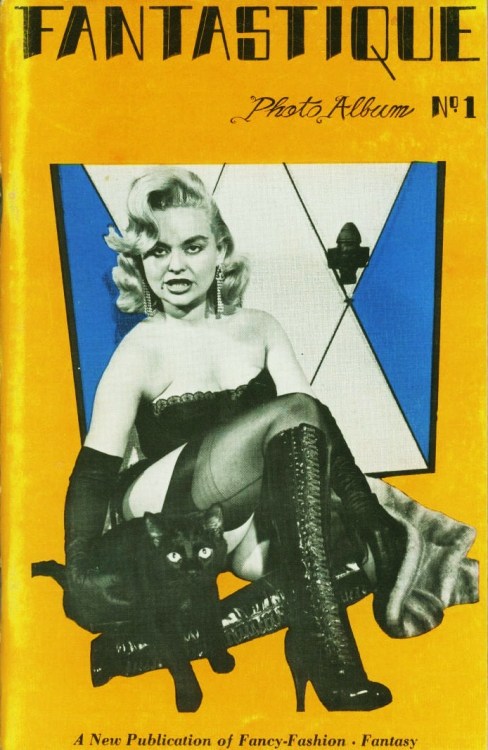

vigorton2: FANTASTIQUE - PHOTO ALBUM #1 A New Publication of...

FANTASTIQUE - PHOTO ALBUM #1

A New Publication of Fancy-Fashion+Fantasy

The kitty is the BEST part ;)) retrogirly~

Superstringy compactifications compatible with the \(B\to\mu^+\mu^-\) decays seen by LHCb

By Gordon Kane, Distinguished University Professor of Physics, University of Michigan and a Lilienfeld Prize winner

Intro by LM: The HCP 2012 conference in Kyoto, Japan, began today. Pallab Ghosh of the BBC immediately told us that "SUSY has been certainly put in the hospital". This statement boils down to the recently presented LHCb measurement of the rarest B-meson decay observed so far (paper in PDF, public info), namely \(B_s^0\to\mu^+\mu^-\), whose observed branching ratio \(3.2^{+1.5}_{-1.2}\times 10^{-9}\) (about 99% certainty that it is nonzero) agrees with the Standard Model prediction of \((3.54\pm0.30)\times 10^{-9}\). See also an LHCb paper from a few days ago on another decay, and texts on the muon decay by Harry Cliff at the Science Museum Discovery blog, Michael Schmitt, Tommaso Dorigo, and Prof Matt Strassler. But what do actual supersymmetric stringy compactifications predict about this decay? Are they dead?
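A quick back-of-the-envelope check of how well the measured branching ratio agrees with the Standard Model value quoted above. This is a sketch under a crude Gaussian approximation (take the experimental error bar on the side facing the prediction and add the theory error in quadrature); it is not the statistical procedure LHCb actually uses.

```python
import math

# Numbers quoted above, in units of 1e-9.
MEASURED = 3.2
ERR_UP, ERR_DOWN = 1.5, 1.2    # asymmetric experimental errors
SM_PRED, SM_ERR = 3.54, 0.30   # Standard Model prediction and its error


def naive_pull(x: float, err_up: float, err_down: float,
               prediction: float, pred_err: float) -> float:
    """Signed distance of the prediction from the measurement, in sigma.

    Crude approximation: use the experimental error on the side where the
    prediction lies and combine it in quadrature with the theory error.
    """
    exp_err = err_up if prediction > x else err_down
    return (prediction - x) / math.hypot(exp_err, pred_err)


print(f"pull = {naive_pull(MEASURED, ERR_UP, ERR_DOWN, SM_PRED, SM_ERR):.2f} sigma")
```

With these inputs the difference comes out at roughly 0.2 sigma, i.e. the measurement sits comfortably on top of the Standard Model value.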

I want to thank Luboš Motl for his interest and for inviting me to summarize our rare decay predictions for the data that is appearing this week. Basically the prediction for supersymmetry based on compactified string/M theories is that any rare decay rate should equal the Standard Model one within an accuracy of a few per cent.

Although many string/M theory predictions cannot yet be made accurately, some can, in particular the prediction for \(B_s\to \mu^+\mu^-\). The short summary of the argument is that compactified string/M theories have moduli that describe the shapes and sizes of the small dimensions. The moduli fields have quanta, scalar particles, that decay gravitationally, so they have long lifetimes. In order not to destroy the successes of nucleosynthesis, the moduli have to be heavier than about \(30\TeV\). One can show that the lightest eigenvalue of the moduli mass matrix is connected to the gravitino mass in theories with softly broken supersymmetry, and in turn that in such theories the squark and slepton (and Higgs scalar) masses are essentially equal to the gravitino mass. Thus the squarks and sleptons are heavier than about \(30\TeV\), and they are predicted to be too heavy to observe at the LHC or via the rare decays. The LHCb result agrees with this prediction. While the scalars are too heavy to be seen easily, gluinos, neutralinos and one chargino should be seen at the LHC.

LHCb detector coils

A review article summarizing compactified M-theory predictions including those for the \(B_s\to\mu^+\mu^-\), many of which also hold for other corners of string theory, was recently published by Bobby Acharya, Piyush Kumar, and myself,

Compactified String Theories - Generic Predictions for Particle Physics (PDF).

The same theory led to the prediction (before the data) of a Higgs boson mass of \(126\pm 2\GeV\), including all supergravity constraints (details in the review article). Some people used phenomenological arguments to suggest that the scalars were heavy, and thus expected similar results for rare decays. It should be emphasized that our predictions start from Planck-scale M/string theory and derive the results for supersymmetric \(\TeV\)-scale theories. Getting the Standard Model result for \(B_s\to \mu^+\mu^-\) is the only such prediction derived in a supersymmetric theory, and it adds to the evidence for supersymmetry and for M/string theories beginning to become a meaningful predictive and explanatory framework for particle physics and cosmology.

P.S. by LM: On page 26 of the April 2012 review above, you may read that the branching ratio has SUSY contributions going like \((\tan\beta)^6\), but because the result is virtually indistinguishable from the Standard Model, one may only conclude that \(\tan\beta\lessapprox 20\). The upper limit "twenty" may get reduced a bit in the wake of the new LHCb measurement.
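The steep \((\tan\beta)^6\) dependence is why agreement with the Standard Model bounds \(\tan\beta\) so effectively: doubling \(\tan\beta\) multiplies the SUSY contribution by \(2^6=64\). A small sketch, assuming the SUSY piece scales purely as \((\tan\beta)^6\) with every other parameter held fixed; this is a simplification of the full expression in the review, and the function name is illustrative.

```python
def susy_scaling(tan_beta: float, tan_beta_ref: float = 20.0, power: int = 6) -> float:
    """Size of the SUSY contribution at tan_beta relative to tan_beta_ref,
    assuming it scales purely as tan(beta)**power with all else fixed."""
    return (tan_beta / tan_beta_ref) ** power


for tb in (10, 20, 30, 40):
    print(f"tan(beta) = {tb:2d}: SUSY term x {susy_scaling(tb):.3g} relative to tan(beta) = 20")
```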

When Gotham is ashes, you have my permission to DANCE.

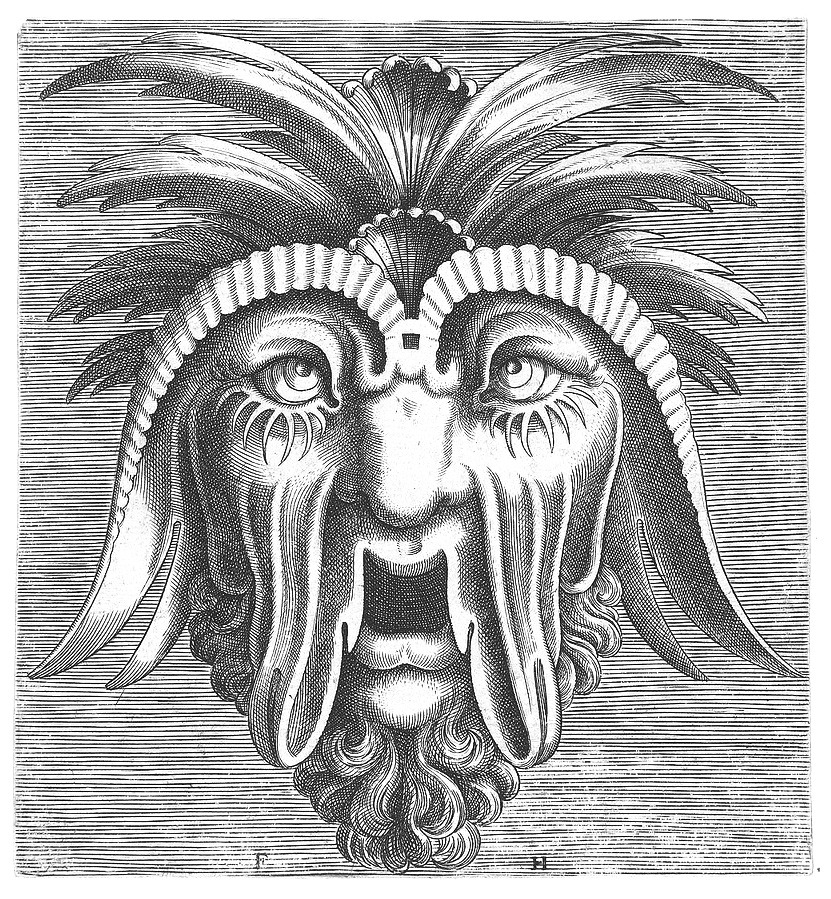

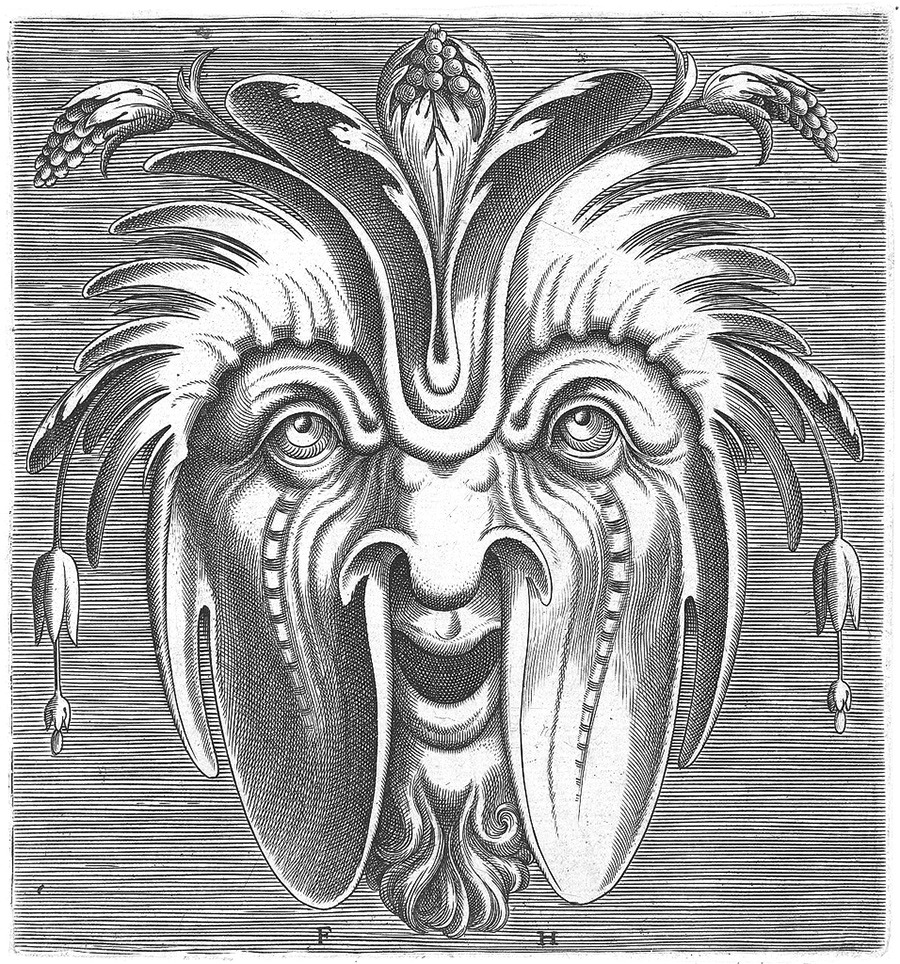

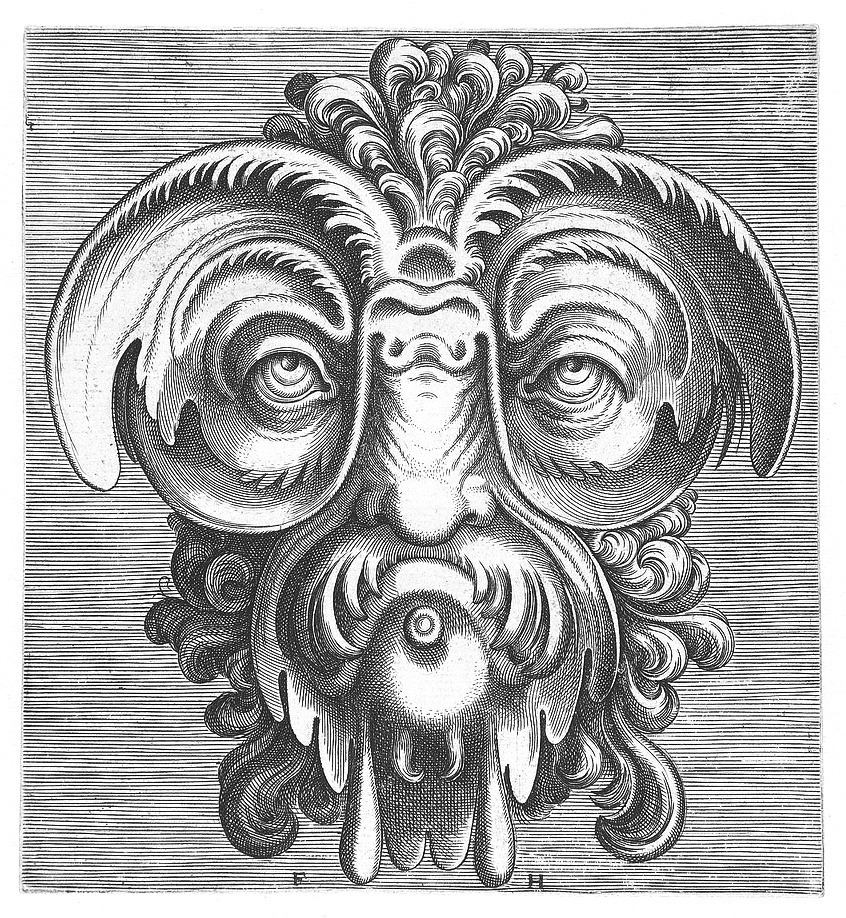

A mask tells us more than a face

From BibliOdyssey

Folkert

Wood be nice

The project brings the images into the tangible world, wood, as a kind of transmutation, so that they can once again, or fully, be admired, touched, hung on a wall or given as a gift.

Thanks to the project's success and the attention it received, other artists recently joined Daniel and Andrei and created natural extensions of the original idea, Bucharest on wood and Killed by the sound, in which, in addition to photos, collages of figures and objects on wood seek to give material form to the settings, character and situations of the capital, Bucharest. Mosca branca!

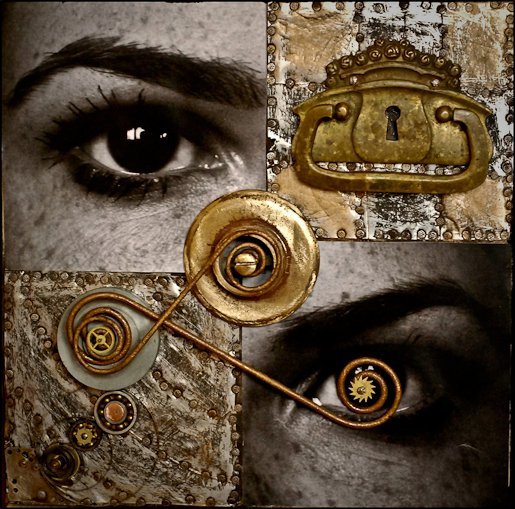

I keep spinning the crystal to see all sides but I can never exactly see the side that is turned away

Photographer via Arpeggia. Title via wood s lot.

Will 50 Watts

The music of stones, paths of shared footmarks, sleeping by the river’s roar

By way of wowgreat

Folkert

Winter festival of lights in Japan

Opening this month, Winter Illumination is a light show installed at the Nabana no Sato botanical garden in Nagashima, Kuwana.

The star of the show is the walk through the tunnel of lights, which completely surrounds visitors, as if they were stepping through a magic portal.

This year's theme is Nature, and it promises beautiful scenes such as sunrise over Mount Fuji, rainbows and the formation of an aurora borealis.

If you happen to be in Japan until March 31, make sure to experience Winter Illumination.

* All photos by Bunnyojisan

Fermi may be seeing a 6 GeV WIMP, too

According to the internal counter, this text is the 5,000th published entry on this blog.

At a Fermi Telescope Symposium, the Fermi Collaboration revealed its opinion about Christoph Weniger's celebrated \(130\GeV\) line:

Search for gamma-ray spectral lines in the Milky Way diffuse with the Fermi Large Area Telescope (PDF by Andrea Albert)

As Jester mentions, the glass they offer is half-full or half-empty, depending on your personal taste. There's an acknowledged excess near \(135\GeV\), which is their new central value of the energy after some adjustments, but they only admit a 2+ or 3+ sigma result, depending on whether they zoom in on the Galactic center.

Moreover, they see "similar" excesses at other energies in other portions of the sky, and the would-be signal near \(135\GeV\) is less smooth than the dark matter interpretation would suggest.

But there's something else interesting in the data.

Look at this graph:

It has some excesses and deficits. There's a 2.5-sigma deficit at \(E=35\GeV\), but what is even more intriguing is the near-3-sigma excess at \(E=6\GeV\). If the WIMP dark matter particle had this mass, a pair of WIMPs could annihilate into two photons, each carrying this energy.
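Why a line at energy \(E\) points to a WIMP of mass \(E\): WIMPs in the halo move at speeds of order \(10^{-3}c\), so they annihilate essentially at rest, and two-body kinematics for a final state \(\gamma X\) gives \(E_\gamma = m_\chi\,(1 - m_X^2/4m_\chi^2)\). For \(X=\gamma\) each photon carries exactly \(m_\chi\). A minimal sketch; the function name and the optional partner-mass argument are illustrative additions, not from the post.

```python
def line_energy(m_wimp: float, m_partner: float = 0.0) -> float:
    """Photon energy (GeV) from WIMP pair annihilation chi chi -> gamma X at rest.

    With sqrt(s) = 2 * m_wimp, E_gamma = (s - m_partner**2) / (2 * sqrt(s))
    = m_wimp * (1 - m_partner**2 / (4 * m_wimp**2)).
    m_partner = 0 is the two-photon case discussed in the text.
    """
    if m_partner >= 2.0 * m_wimp:
        raise ValueError("final state not kinematically allowed")
    return m_wimp * (1.0 - m_partner**2 / (4.0 * m_wimp**2))


for m in (6.0, 135.0):
    print(f"m_chi = {m} GeV -> gamma-gamma line at {line_energy(m)} GeV")
```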

And a WIMP of the mass slightly below \(10\GeV\) is suggested by the Dark Matter Is Seen (DMIS) allies among the dark matter direct search experimental teams. Could it be compatible? Could they hint at the same light dark matter particle?

Recall that, as the chart above implies, there's some disagreement among the Dark Matter Is Seen allies concerning the mass and cross section of the would-be WIMP. In particular, CoGeNT could be roughly compatible with \(6\GeV\), or at least \(6.5\GeV\) is within its 1-sigma zone. The other DMIS allies prefer somewhat higher masses, around \(10\GeV\).

Recall that Fermi has been a peripheral warrior for the DMIS coalition in the Is Dark Matter Seen War for more than a year, as it has backed up some of PAMELA's claims about the positron fraction (for energies higher than \(10\GeV\)).

Fermi on the Fermi line

- Recall that Fermi's previous line search in 2-year data didn't report any signal. Actually, neither does the new 4-year one, if Fermi's a-priori optimized search regions are used. In particular, the significance of the bump near 130 GeV in the 12x10 degree box around the galactic center is merely 2.2 sigma. There is no outright contradiction with the theorists' analyses, as the latter employ different, more complicated search regions. In fact, if Fermi instead focuses on a smaller 4x4 degree box around the galactic center, they see a signal with 3.35 sigma local significance (after reprocessing the data; without reprocessing, the significance would be 4 sigma). This is the first time the Fermi collaboration admits seeing a feature that could possibly be a signal of dark matter annihilation.

- Another piece of news is that the 130 GeV line has been upgraded to a 135 GeV line: it turns out that reprocessing the data shifted the position of the bump. That should make little difference to dark matter models explaining the line signal, but in any case you should expect another round of theory papers fitting the new number ;-)

- Unfortunately, Fermi also confirms the presence of a 3 sigma line near 130 GeV in the Earth limb data (where there should be none). Fermi attributes the effect to a 30% dip in detection efficiency in the bins above and below 130 GeV. This dip cannot by itself explain the 135 GeV signal from the galactic center. However, it may be that the line is an unlucky fluctuation on top of the instrumental effect due to the dip.

- Fermi points out a few other details that may be worrisome. They say there's some indication that the 135 GeV feature is not as smooth as expected if it were due to dark matter. They find bumps of similar significance at other energies and other places in the sky. Also, the significance of the 135 GeV signal drops when reprocessed data and more advanced line-fitting techniques are used, while one would expect the opposite if the signal were of physical origin.

- A fun fact for dessert. The strongest line signal that Fermi finds is near 5 GeV and has 3.7 sigma local significance (below 3 sigma with the look-elsewhere effect taken into account). 5 GeV dark matter could fit the DAMA and CoGeNT direct detection results, if you ignore the limits from the Xenon and CDMS experiments. Will the 5 GeV line prove as popular with theorists as the 130 GeV one?
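The gap between a 3.7 sigma local and a sub-3-sigma global significance is the look-elsewhere effect: scanning many energies and sky regions gives many chances for a fluctuation. A rough sketch, assuming \(N\) independent trials (a hypothetical parameter; Fermi's actual correction is more involved) so that \(p_{\rm global}=1-(1-p_{\rm local})^N\), with one-sided Gaussian p-values. Under these assumptions, a trials factor of about 13 or more already drags a 3.7 sigma local excess below 3 sigma.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def p_value(z: float) -> float:
    """One-sided tail probability of a z-sigma upward fluctuation."""
    return 1.0 - STD_NORMAL.cdf(z)


def z_score(p: float) -> float:
    """Inverse of p_value: the one-sided significance for tail probability p."""
    return STD_NORMAL.inv_cdf(1.0 - p)


def global_significance(z_local: float, trials: int) -> float:
    """Approximate look-elsewhere correction for `trials` independent searches."""
    p_global = 1.0 - (1.0 - p_value(z_local)) ** trials
    return z_score(p_global)


for n in (1, 10, 30, 100):
    print(f"N = {n:3d}: 3.7 sigma local -> {global_significance(3.7, n):.2f} sigma global")
```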

Snapshot: Gene Clark, Emmylou Harris and Eddie Tickner

Live Squid Preparation

Rafa Spoladore Ψ: Delicious, you're killing me!

Shocking content warning

Random image from fukung.net: 3457456ef662e8ab6ff04e91d79dc8ab.png

photos by Nino Migliori

Rafa Spoladore Ψ: Beautiful images.